Entries tagged 'code'

Using AutocompleteSelect in a Django Admin form

I was building an action for a Django Admin changelist view to allow associating models to a related model. But to select the related model on the form, a regular ModelChoiceField would load all of the possibilities to populate the <select> form field, which is pretty terrible when there are thousands to tens of thousands of options.

Within the change view of the model, you can just add that field to autocomplete_fields and Django has a nice built-in widget that uses a Select2-style form input. It is called AutocompleteSelect. I couldn't find any examples of someone using this widget outside of it being pulled in by autocomplete_fields but it ended up being surprisingly easy.

Here is a rough example of the form definition at the heart of the solution:

from django import forms
from django.contrib import admin
from django.contrib.admin.widgets import AutocompleteSelect

from .models import Model, RelatedModel


class BatchAssociationForm(forms.Form):
    related_field = forms.ModelChoiceField(
        queryset=RelatedModel.objects.all(),
        label="Related",
        help_text="Select the object to relate to",
        # The widget takes the model field that points at RelatedModel
        # and the admin site that serves the autocomplete view.
        widget=AutocompleteSelect(Model.related_field.field, admin.site),
    )

No extra package necessary. I am using Django 5.2, and I have no idea if this will work with earlier versions.

A while back, I wanted to build a filter for a changelist admin that used the autocomplete widget for selecting the value(s) to filter on. Now that I cracked it for a form, maybe I should revisit that idea.

Just make it better

This access hatch on a sidewalk on Main St. in downtown Los Angeles used to have chipped concrete around the edges and the doors had a lot of flex to them when you walked over them. A few weeks ago, it was finally fixed up and now it looks clean, the doors don’t have any flex to them, and my near-daily experience of walking on that stretch of sidewalk feels a little bit better and safer.

Today, the website for the PHP Documentation Team was finally moved to a new host. Everything (or nearly so) related to the installation is on the appropriate repositories, it’s being served up over TLS, some of the code has been cleaned up, and the contribution guide has gotten more focused attention than it has had in several years.

None of this is perfect. None of it is done. But making things incrementally better is the kind of good trouble that I want to continue.

Into the blue again after the money’s gone

A reason that I finally implemented better thread navigation for the PHP mailing list archives is because it was a bit of unfinished business — I had implemented it for the MySQL mailing lists (RIP), but never brought it back over to the PHP mailing lists. There, it accessed the MySQL database used by the Colobus server directly, but this time I exposed what I needed through NNTP.

An advantage to doing it this way is that anyone can still clone the site and run it against the NNTP server during development without needing any access to the database server. There may be future features that require coming up with ways of exposing more via NNTP, but I suspect a lot of ideas will not.

Another reason to implement thread navigation was that a hobby of mine is poking at the history of the PHP project, and I wanted to make it easier to dive into old threads like this thread from 2013 when Anthony Ferrara, a prominent PHP internals developer, left the list. (The tweet mentioned in the post is gone now, but you can find it and more context from this post on his blog.)

Reading this very long thread about the 2016 RFC to adopt a Code of Conduct (which never came to a vote) was another of those bits of history that I knew was out there but hadn’t been able to read quite so easily.

Which just leads me to tap the sign and point out that there is a de facto Code of Conduct and a group administering it.

I think implementing a search engine for the mailing list archives may be an upcoming project because it is still kind of a hassle to dig threads up. I’m thinking of using Manticore Search. Probably by building it into Colobus and exposing it via another NNTP extension.

Surprise, it’s a new release of Colobus!

Because there’s nothing that quite says “hire me” like polishing your Perl bona fides, I have finally made a new release of Colobus, the NNTP server that runs on top of ezmlm and Mlmmj mail archives. (It was actually three new releases, I had to work out some kinks. More to come as I work out more in testing.)

The Mlmmj support and some of the other tweaks are really just pulling in and polishing changes that had been made to the install used for the PHP.net mailing lists. There are also a few bug fixes I pulled in from Ask’s fork of colobus that the perl.org project uses.

I did add a significant new feature, which is a non-standard XTHREAD id_or_msgid command that returns an XOVER-style result for all of the messages in the same “thread”. The code to take advantage of this new feature for the PHP mailing list archives is on the way.
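As a rough illustration of what a client could do with an XOVER-style result, here is a minimal Python sketch that turns overview lines into a reply tree. It assumes the XTHREAD response uses the same tab-separated fields as XOVER (article number, subject, author, date, message-id, references, and so on), which is not confirmed here, and the sample lines and the names Overview, parse_overview, and build_thread are invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Overview:
    number: int
    subject: str
    author: str
    date: str
    message_id: str
    references: list
    replies: list = field(default_factory=list)


def parse_overview(line):
    """Parse one tab-separated overview line (XOVER field order assumed)."""
    parts = line.rstrip("\r\n").split("\t")
    number, subject, author, date, message_id, references = parts[:6]
    return Overview(int(number), subject, author, date, message_id, references.split())


def build_thread(lines):
    """Attach each message to the last message-id in its References header."""
    messages = [parse_overview(line) for line in lines]
    by_id = {message.message_id: message for message in messages}
    roots = []
    for message in messages:
        parent = by_id.get(message.references[-1]) if message.references else None
        if parent is None:
            roots.append(message)  # thread starter, or the parent is not in this result
        else:
            parent.replies.append(message)
    return roots


# Invented sample data, shaped like XOVER output.
sample = [
    "101\tHello\tA <a@example.org>\t1 Jan 2024\t<a@example.org>\t\t1200\t10",
    "102\tRe: Hello\tB <b@example.org>\t2 Jan 2024\t<b@example.org>\t<a@example.org>\t900\t8",
    "103\tRe: Hello\tC <c@example.org>\t3 Jan 2024\t<c@example.org>\t<a@example.org> <b@example.org>\t700\t5",
]
roots = build_thread(sample)
```

Using only the last reference as the parent keeps the sketch short; a fuller threading algorithm would fall back to earlier references when the direct parent is missing.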

Writing documentation in anger

As I continue to slog through my job search, I also continue to contribute in various ways to the PHP project. Taking some inspiration from the notion of “good trouble” so wonderfully modeled by John Lewis, I have been pushing against some of the boundaries to move and expand the project.

In a recent email to the Internals mailing list from Larry Garfield, he said:

And that's before we even run into the long-standing Internals aversion to even recognizing the existence of 3rd party tools for fear of "endorsing" anything. (With the inexplicable exception of Docuwiki.)

I can guess about a lot of the history there, but I think it is time to recognize that the state of the PHP ecosystem in 2024 has come a long way since the more rough-and-tumble days when related projects like Composer were experimental.

So I took the small step of submitting a couple of pull requests to add a chapter about Composer and an example of using its autoloader to the documentation.

The PHP documentation should be more inclusive, and I think the best way to make that happen is for me and others to just start making the contributions. We need to shake off the notion that this is somehow unusual, stop saying nothing about third-party tools for fear of “favoring” one over the other, and help support the whole PHP ecosystem through its primary documentation.

I would love to add a chapter on static analysis tools. And another one about linting and refactoring tools. Maybe a chapter on frameworks.

None of these have to be long or exhaustive. They only need to introduce the main concepts and give the reader a better sense of what is possible and the grounding to do more research on their own.

A big benefit of putting this sort of information in the documentation is that there are teams of people working on translating the documentation to other languages.

And yes, contributing to the PHP documentation can be kind of tedious because the tooling is pretty baroque. I am happy to help hammer any text that someone writes into the right shape to make it into the documentation, just send me what you have. If you want to do more of the heavy lifting, join the PHP documentation team email list and let’s make more good trouble together.

The rules can matter

I had written a variation of this in a couple of spots now and wanted to put it here, this weird place where I keep writing things:

Organizations with vague rules get captured by people who just fill in the gaps with rules they make up on their own to their own advantage, and then they will continuously find reasons that the “official” rules can’t be fixed because the proposed change is somehow imperfect so they force you to accept the rules they have made up.

The first time I ran into this sort of problem was probably in college being involved in student government, but I haven’t been able to come up with the specifics of what happened that makes me think that it was.

A later instance where I came across it that I remember more vividly was when I was involved with the Downtown Los Angeles Neighborhood Council and how one of the executive board members would squash efforts by citing “standing rules” that nobody could ever substantiate.

More recently, I came across it in looking at discussions of why certain people are or are not allowed to vote on PHP RFCs where the lead developers of one or more popular PHP packages have been shut out because of what I would argue is a misreading of the “Who can vote” section of the Voting Process RFC.

Is this Twig or Jinja? Maybe both!

A project I have been playing around with the last couple of weekends has been making a Python version of this site. The code, which is very rough because I barely know what I’m doing and I’m in the hacking-it-together phase as opposed to trying to make it pretty, is in this GitHub repository.

I am using the Flask framework with SQLAlchemy and Jinja.

I was interested to see if I could just use the same templates as my PHP version, which uses Twig, but there have been a few sticking points:

- The Twig escape filter takes an argument to more finely control the context it is being used in so it knows how to escape within HTML, or a URI, or an HTML attribute. Jinja’s escape doesn’t take an argument. I was able to override it to take an extra argument, but mostly ignore it for now.
- Jinja doesn’t have Twig’s ternary ?: operator. Not surprising, Python doesn’t either. I rewrote those bits of templates to use slightly more verbose if blocks.
- Jinja doesn’t have Twig’s string comparators like matches and starts with. Looks like I can get rid of the need for them, but I just punted on those for now.
- Jinja doesn’t have a block() function. I think I can also avoid needing it.
- Jinja’s url_for() method expects a more Pythonic argument list, like url_for('route', var = 'value') but Twig uses a dictionary like url_for('route', { 'var' : 'value' }). I was able to override Jinja’s version to handle this, too.
- I’ll need to implement versions of Twig’s date() function and filter.
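The url_for() difference in the list above can be bridged with a small wrapper. This is only a sketch of one way to do it, not necessarily how the site does it: accept_twig_params and fake_url_for are hypothetical names, and fake_url_for stands in for Flask's real url_for so the example runs without Flask installed.

```python
import functools
from urllib.parse import urlencode


def accept_twig_params(url_for):
    """Wrap a keyword-style url_for so it also accepts Twig's trailing dict."""

    @functools.wraps(url_for)
    def wrapper(endpoint, params=None, **values):
        if params is not None:
            if not isinstance(params, dict):
                raise TypeError("positional parameters must be a dict")
            # Explicit keyword arguments win over entries in the dict.
            values = {**params, **values}
        return url_for(endpoint, **values)

    return wrapper


def fake_url_for(endpoint, **values):
    """Stand-in for a real url_for, used only to demonstrate the wrapper."""
    query = urlencode(sorted(values.items()))
    return f"/{endpoint}?{query}" if query else f"/{endpoint}"


url_for = accept_twig_params(fake_url_for)
twig_style = url_for("route", {"var": "value"})
jinja_style = url_for("route", var="value")
```

In a Flask app the wrapped function would presumably be registered as a template global, for example through app.jinja_env.globals, so that both call styles work inside templates.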

I had cobbled together a way on the Twig side to let me store some templates (side navigation, the “Hire me!” message on the front page) in the database, so my next trick is going to implement template loaders for both the PHP and Python versions so that is more cleanly abstracted. I have the Python side of that done already.

I hope to eventually create a Rust version of this, too, and it will be interesting to see what new complications using Tera will bring.

Awesomplete is... awesome

An earlier iteration of my blog software had an autocomplete widget for adding tags when I was writing a post, but somewhere along the line I lost track of that version of the software, and I’ve just been winging it when I added tags to posts since then. Yesterday I ran across a reference to Awesomplete which is a lightweight autocomplete widget by Lea Verou, and now I’ve plugged it into the couple of places where I add tags.

I need to do some more behind-the-scenes work to clean up the tags in the system now, but this should help keep me on track in terms of knowing when I’m actually creating a new tag instead of using an existing one. (Like I could never remember if I have been using the “book” or “books” tag and would always have to look it up.)

Taste the rainbow

When I added the support for dark mode, I had to add a little support to the syntax highlighting styles so they looked okay in both light and dark modes.

Today I went in and worked on the JavaScript code a bit to make it a little more modern and refined the styles a little bit more. I also added the language file for YAML, and built it out so it does a better job of highlighting some of YAML’s more special syntax.

I am sticking with this JavaScript-based syntax highlighting for now, mostly because it works.

Here is a YAML sample pulled from the 1.2.2 spec plus a couple of minor additions to show off additional syntax that is handled.

%YAML 1.2
--- !<tag:clarkevans.com,2002:invoice>
invoice: 34843
date : 2001-01-23
bill-to: &id001
  given : Chris
  family : Dumars
  address:
    lines: |
      458 Walkman Dr.
      Suite #292
    city : Royal Oak
    state : MI
    postal : 48046
ship-to: *id001
product:
- sku : BL394D
  quantity : 4
  description : Basketball
  price : 450.00
- sku : BL4438H
  quantity : 1
  description : Super Hoop
  price : 2392.00
tax : 251.42
total: 4443.52
shipped:
- false
comments:
  Late afternoon is best.
  Backup contact is Nancy
  Billsmer @ 338-4338.

Acomplish accomplished

I was perusing some old messages in the archives of the PHP mailing lists, and I stumbled across an email from me where I shared a sample of code as an example of a “natural” language I had used. That’s some real Acomplish code!

[ Declaration of a function to add an item to a list, keeping the list sorted. ]
To insert an item 'the_item' sorted into an item 'the_container':
  For each item 'the_place' in the_container,
    If the_item < the_place,
      Add the_item before the_place.
      Return.
  Append the_item to the_container.

Make a list named myList of { 2, 3, 7, 14}.
Insert 10 sorted into myList.
Print myList.
[ Prints 2, 3, 7, 10, 14, more or less. ]

And another historical bit of trivia in that email: I proposed a for ... in syntax for PHP, although that eventually became foreach ... as.
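For comparison, the Acomplish sample translates almost line for line into Python. This is just a sketch to show the shape of the algorithm; insert_sorted is a name made up for the example, and the standard library's bisect.insort does the same job with a binary search.

```python
import bisect


def insert_sorted(container, item):
    """Insert item before the first larger element, or append it at the end."""
    for index, place in enumerate(container):
        if item < place:
            container.insert(index, item)
            return
    container.append(item)


my_list = [2, 3, 7, 14]
insert_sorted(my_list, 10)
print(my_list)  # [2, 3, 7, 10, 14]

# The standard-library equivalent, for an already sorted list.
other_list = [2, 3, 7, 14]
bisect.insort(other_list, 10)
```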

Looking for photos

I realized the other day that I hadn’t actually wired up the search box in my photo library.

I don’t like how it is a separate search from the rest of search on this site, but that’s a hill to climb another day.

Dr. Brain Thinking Games: IQ Adventure

Dr. Brain Thinking Games: IQ Adventure was one of the first two games produced by Knowledge Adventure based on the earlier Dr. Brain games from Sierra Entertainment. KA and Sierra had wound up under the same corporate umbrella, and the “games group” at Knowledge Adventure that I was part of developed them. Our group handled three projects at the time: IQ Adventure, codename “Dime,” Dr. Brain Thinking Games: Puzzle Madness, codename “Nickel,” and the corporate website, codename “Penny.”

IQ Adventure is a third-person isometric puzzle/adventure game which was written in C++, and we used the networking and graphics library from Blizzard Entertainment (another corporate sibling). I was the lead programmer. We did some strangely ambitious things, one of which is that the game levels weren’t just laid out by hand, but we had a map specification language that was used to generate variations of the levels. Here is an example map that I was able to extract from the files on the CD. I couldn’t tell you how it works, really.

During our early prototyping, we did have a way of building environments by hand to test out artwork and the interface. It was just a mode in the game engine that let you “draw” with terrain tiles or place others into the environment. I remember before the team doing the artwork had created our main character, I prototyped with just a little whirling tornado that moved around so I could work on things like the path-finding algorithm.

The whole game was very data-driven. There was a text dialog system that let you interact with the NPC characters that was HTML-inspired. The animations of Dr. Brain giving you instructions were lip-synced using a tool that Knowledge Adventure had developed for their whole line of titles, which meant it was just audio files and frame timings that drove an eight-frame animation set. All of the puzzles and in-game quizzes were rule-based so they would be different on every playthrough. (Sorry to our QA team!)

Here’s a video I found of someone playing through one of the levels (or more, I didn’t watch the whole thing).

The multiplayer was pretty simple but I also don’t remember much of the specifics. You could chat with other players, and because this was aimed at younger users there was a basic attempt at filtering out bad words, and I believe all of the chat was logged and someone from the customer service team was assigned to review it regularly, or maybe only when someone complained.

I wish that I still had the source code for the game and even the original media asset sources. In the released game, they were all rendered down to a 256 color palette because that was how things were at the time. I think it would be fairly straightforward to bring the game up on current platforms. You could probably even do it on WebAssembly or something else cross-platform. Unfortunately all of the filenames get lost when extracting the assets from the CD, so even just sorting them out to build something else with them would be pretty tedious. (Then again, the original game may still just work on a more current version of Windows that can run 32-bit apps, since I don’t think there was anything particularly fancy about it.)

I believe that Puzzle Madness was developed in Acomplish, the in-house proprietary multimedia scripting language that I blogged about earlier.

The third game in the series (from KA), Dr. Brain Thinking Games: Action Reaction, was a first-person puzzle/shooter, and that was partly funded by Intel in their effort to drive adoption of the Pentium processor. It was developed using the Unreal Engine. The bad guys in that game worked for S.P.O.R.E.: Sinister People Organized Really Efficiently, which still makes me laugh. (I am pretty sure the codename for this one was “Quarter” but I didn’t work on it and left the company while it was being developed.)

Kill It with Fire

Kill It with Fire: Manage Aging Computer Systems (and Future Proof Modern Ones) by Marianne Bellotti was recommended to me by a college classmate on a post I made on LinkedIn a while back. (Part of a series of observations about how terrible ZipRecruiter is.)

This book is great, and I would highly recommend it to any software engineer. It’s not only about modernizing software applications, but has a lot of insight into how being a long-lived project is a reasonably likely outcome for any project, and you can save someone in the future from a lot of trouble by making some better decisions up front.

It also leans into the human factors and business realities of how software is developed, something I feel like I have been complaining about here and elsewhere.

Maintenance engineer, slightly used

A popular response to the attempted backdooring of the XZ Utils has been people like Tim Bray talking about the maintenance of open source projects and how to pay for them.

When I transitioned from leading the web development team at MySQL to an engineering position in the server team, I spent the first year as a maintenance engineer. I blogged a little about the results of that one year and calculated that I had fixed approximately one reported bug per working day.

But you’ll also notice that I had to heap some praise on Sergei Golubchik who reviewed fixes for even more bugs than I had fixed. (He also was responsible for working on new features. He is extremely talented, and I’m not surprised to see he’s the chief architect at MariaDB.)

That sort of reviewing and pulling in patches is a critical component of maintaining an open source project, and a big problem is that it is not all that fun. Writing code? Fun. Fixing bugs? Often fun. Reviewing changes, merging them in, and making releases? A lot less fun. (Building tools to do that? More fun, and can sidetrack people from doing the less-fun part.)

It is also a lot different for projects with a lot of developers, a small crowd of developers, and just a few developers. The process that a patch goes through to make it into the Linux kernel doesn’t necessarily scale down to a project with just a few part-time developers, and vice versa. A long time ago, I made some noise about how MySQL might want to adopt something that looked more like the Linux kernel system of pulling up changes rather than what was the existing system of many developers pushing into the main tree, and nobody seemed very interested.

Anyway, as people think about creating ways of paying people to maintain open source software, I think it is very important to make sure they don’t inadvertently create a system that bullies existing open source project maintainers to make them focus on the less-fun aspects to developing software, because that’s kind of how we got into this latest mess.

You already see that happening with supposed-to-be-helpful supply chain tools demanding that projects jump through hoops to be certified, or packaging tools trying to push their build configuration into projects (with an extra layer of crypto nonsense), or a $3 trillion company demanding a “high priority” bug fix from volunteers.

I am curious to see where these discussions lead, because there is certainly not one easy solution that is going to work everywhere. It will also be interesting to see how quickly they lose steam as we get some distance from the XZ Utils backdoor experience.

(Also, I’m still looking for work, and I’m willing to do the less-fun stuff if the pay is right.)

But I still haven’t found what I’m looking for

I’m still looking for a job.

It is a new month, so I thought it was a good time to raise this flag again, despite it being a bad day to try and be honest and earnest on the internet.

I wish I was the sort of organized that allowed me to run down statistics of how many jobs I have applied to and how many interviews I have gone through other than to say it has been a lot and very few.

Last month I decided to start (re)developing my Python skills because that seems to be much more in demand than the PHP skills I can more obviously lay claim to. I made some contributions to an open source project, ArchiveBox: improving the importing tools, writing tests, and updating it to the latest LTS version of Django from the very old version it was stuck on. I also started putting together a Python library/tool to create a single-file version of an HTML file by pulling in required external resources and in-lining them; my way of learning more about the Python culture and ecosystem.

That and attending SCALE 21x really did help me realize how much I want to be back in the open source development space. I am certainly not dogmatic about it, but I believe to my bones that operating in a community is the best way to develop software.

I think my focus this month has to be on preparing for the “technical interview” exercises that are such a big part of the tech hiring process these days, as much as I hate it. I think what makes me a valuable senior engineer is not that I can whip up code on demand for data structures and algorithms, but that I know how to put systems together, have a broader business experience that means I have a deeper understanding of what matters, and can communicate well. But these tests seem to be an accepted and expected component of the interview process now, so it only makes sense to polish those skills.

(Every day this drags on, I regret my detour into opening a small business more. That debt is going to be a drag on the rest of my life, compounded by the huge weird hole it puts in my résumé.)

Is GitHub becoming SourceForget v2.0?

Back in the day, open source packages used SourceForge for distribution, issue tracking, and other bits of managing the community around projects but it eventually became a wasteland of neglected and abandoned projects and was referred to as SourceForget.

As I have been poking around at adding Markdown parsing and syntax highlighting to my PHP project, I can’t help but feel like GitHub is taking on some of those qualities.

Parsedown is (was?) a popular PHP package for parsing Markdown, but the main branch hasn’t seen any development in at least five years, and the “2.0” branch appears to have stalled out a couple of years ago. Good luck figuring out if any of the 1,100 forks is where active development has moved.

I think it would be good if more community norms and best practices were developed around the idea of the community of a project being able to take over maintenance when the developer steps away. What’s the solution to the thousands of open issues on GitHub that ask if a project is abandoned?

Here is an issue I found on one project where the developer is trying to hand over more access to community members, and I wonder if a guide to taking your project through that transition would have been valuable to move it along.

Another way this comes up that is very relevant is the assertion put forth in “Redis Renamed to Redict” which really asks what moral rights the community has to a project.

(SourceForge also came to be loaded down with advertising and I remember it being kind of a miserable website to use, and as GitHub loads up with “AI” features and feels increasingly clunky to use, it’s just another way I wonder if we are seeing history repeat itself.)

Writing software is fun

Writing software is fun. (For me. Your mileage may vary. But I am not alone in feeling this way.)

This means it is a particularly fraught field for exploitation.

A comparison I would make is to making music. Practically every musical biopic (or fictional version) features the part of the story where the artist (Ray, The One-ders, Elvis, The Dreams, Queen, The Pussycats, etc.) who is creating and/or performing music for their love of creating and performing comes under the influence of someone who sees the potential for money to be made. They have more experience in the business related to the craft, and they use that information asymmetry to exploit the artist.

The business of music has been around quite a bit longer than the business of writing software, and it is still messy and there are constant struggles and upheavals over the rights of artists, how to distribute the money when it gets made, and what sort of gatekeeping goes on within the business.

Seven years ago I pointed out that the games industry was having the same discussions about “crunch time” as 20 years before that. It’s always been a segment of the industry fed on the enthusiasm of people who think writing games is fun.

All of this to say, that as we enter another cycle of software licensing shenanigans in the open source world, I am interested, invested, and extremely tired.

Sometimes I just want to bang on the drums keyboard all day, share that with others, and forget that it is part of this complex ecosystem of people who are coming at it from different angles.

Time to modernize PHP’s syntax highlighting?

This blog post about “A syntax highlighter that doesn't suck” was timely because recently I had been kicking at the code for the syntax highlighter that I use on this blog. It’s a very old JavaScript package called SHJS based on GNU Source-highlight.

I created a Git repository where I imported all of the released versions of SHJS and then tried to update the included language files to the ones from the latest GNU Source-highlight release (which was four years ago), but ran into some trouble. There are some new features to the syntax files that the old Perl code in the SHJS package can’t handle. And as you might imagine, the pile of code involved is really, really old.

That new PHP package seems like a great idea and all, but I really like the idea of leveraging work that other people have done to create syntax highlighting for other languages rather than inventing another one.

On Mastodon, Ben Ramsey brought up a start he had made at trying to port Pygments, a Python syntax highlighter, to PHP.

I ran across Chroma, which is a Go package that is built on top of the Pygments language definitions. They’ve converted the Pygments language definitions into an XML format. Those don’t completely handle 100% of the languages, but it covers most of them.

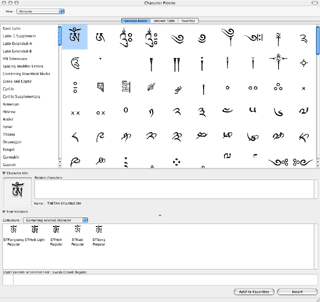

At the end of the day, both GNU Source-highlight and Pygments and variants are built on what are likely to remain imprecise parsers because they are mostly regex-based and just not the same lexing and parsing code actually being used to handle these languages.

PHP has long had its own built-in syntax highlighting functions (highlight_string() and highlight_file()) but it looks like the generation code hasn’t been updated in a meaningful way in about 25 years. It just has five colors that can be configured that it uses for <span style="color: #...;"> tags. There are many tokens that it simply outputs using the same color where it could make more distinctions. If it were to instead (or also) use CSS classes to mark every token with the exact type, you could do much finer-grained syntax highlighting.
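To make the CSS-class idea concrete, here is a small sketch of the same approach using Python's own tokenizer rather than PHP's, since the point is that the language's real lexer decides the token types. The highlight function and the tok- class prefix are invented for the example and say nothing about how PHP would actually implement it.

```python
import html
import io
import keyword
import token
import tokenize


def highlight(source):
    """Wrap every token in a span whose CSS class names its exact token type."""
    # Absolute offset of the start of each line, split the way the tokenizer reads them.
    offsets = [0]
    for line in io.StringIO(source):
        offsets.append(offsets[-1] + len(line))

    def absolute(position):
        row, col = position
        index = min(row, len(offsets)) - 1
        return min(offsets[index] + col, len(source))

    out = []
    prev = 0
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        start, end = absolute(tok.start), absolute(tok.end)
        out.append(html.escape(source[prev:start]))  # whitespace between tokens
        text = source[start:end]
        if text.strip():
            kind = token.tok_name[tok.exact_type].lower()
            if tok.type == token.NAME and keyword.iskeyword(text):
                kind = "keyword"
            out.append(f'<span class="tok-{kind}">{html.escape(text)}</span>')
        else:
            out.append(html.escape(text))  # newlines and indentation stay unwrapped
        prev = max(prev, end)
    out.append(html.escape(source[prev:]))
    return "".join(out)


sample = 'if x < 10:\n    print("small")  # comment\n'
highlighted = highlight(sample)
```

With every token carrying a class such as tok-keyword, tok-less, or tok-comment, the color choices move entirely into a stylesheet, which is also what makes light and dark themes easy.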

Looks like an area ready for some experimentation.

Thoughts from SCALE 21x, day 4

Today was the last day of SCALE 21x. Again I didn’t make it out for the opening keynote, and I just took a quick spin around the expo floor to see it looking sort of quiet and winding down.

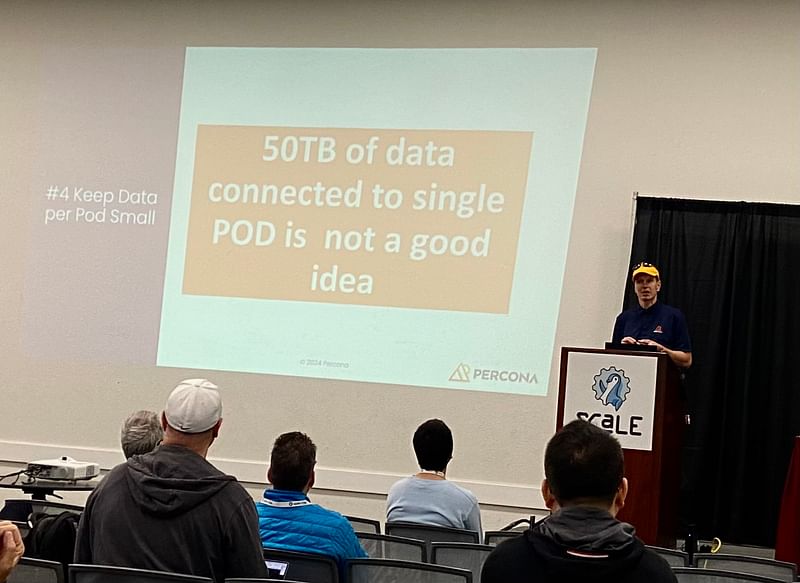

The first talk I attended was Jonathan Haddad on “Distributed System Performance Troubleshooting Like You’ve Been Doing it for Twenty Years” where he shared some of his insights from doing what the title said for companies like Apple and Netflix. His recommendation for greenfield deployments was to have Open Telemetry set up to collect traces and logs, and he was also a big fan of the BPF Compiler Collection (aka bcc-tools) for getting a realtime look into system issues. He was not a fan of running databases in containers, and even less of a fan of running them within Kubernetes. (You could almost see his eye twitch.)

The last talk that I attended (there were just two slots today) was Jen Diamond on “The Git-tastic Power of Conventional Commits.” It was a good talk that used a little light lexical analysis to explain the basic concepts of working with Git (and the revelation that it stands for “global information tracker” although now a little more research shows that’s only sort-of true). This all led into talking about Conventional Commits which is a way of structuring commit messages, and how you could use that in automations and in driving semantic-versioning in the release process.
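As a sketch of how structured commit messages can drive semantic versioning, the following Python reads Conventional Commits headers and picks the next version number. It follows the usual reading of the convention (a fix bumps the patch number, a feat bumps the minor number, and a ! marker or BREAKING CHANGE footer bumps the major number); the function names and sample messages are invented, and this is not taken from the talk.

```python
import re

# type, optional (scope), optional ! for a breaking change, then ": description"
HEADER = re.compile(
    r"^(?P<type>[A-Za-z]+)(?:\((?P<scope>[^)]+)\))?(?P<breaking>!)?: (?P<description>.+)$"
)
RANK = {None: 0, "patch": 1, "minor": 2, "major": 3}


def bump_for(message):
    """Return 'major', 'minor', 'patch', or None for one commit message."""
    lines = message.splitlines()
    match = HEADER.match(lines[0]) if lines else None
    if match is None:
        return None  # not a conventional commit, so it does not affect the version
    footer_breaking = any(
        line.startswith(("BREAKING CHANGE:", "BREAKING-CHANGE:")) for line in lines[1:]
    )
    if match["breaking"] or footer_breaking:
        return "major"
    if match["type"] == "feat":
        return "minor"
    if match["type"] == "fix":
        return "patch"
    return None


def next_version(current, messages):
    """Apply the largest bump found in messages to a MAJOR.MINOR.PATCH string."""
    bump = max((bump_for(m) for m in messages), key=RANK.__getitem__, default=None)
    major, minor, patch = (int(part) for part in current.split("."))
    if bump == "major":
        return f"{major + 1}.0.0"
    if bump == "minor":
        return f"{major}.{minor + 1}.0"
    if bump == "patch":
        return f"{major}.{minor}.{patch + 1}"
    return current


release = next_version("1.4.2", ["fix: handle empty input", "feat(parser): add YAML support"])
```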

The final session was a closing keynote from Bill Cheswick titled “I Love Living in the Future: Half a Century of Computers, Software, and Security” but really could have just been “give the old guy the microphone and let him go!” I left a little over two hours ago, and I wouldn’t be surprised to hear that he’s still going. I hope they let him take a bathroom break.

Thoughts from SCALE 21x, day 3

Another day, another set of thoughts on the experience. It was a busy day at the 21st edition of the Southern California Linux Expo, and the site was more crowded because an episode of America’s Got Talent was being filmed at the Civic Auditorium that is between the two buildings that the conference was held in. If I’d been on the ball, I would have taken a picture of Howie Mandel standing outside his limo.

I will admit that I took my time in the morning and didn’t make it over to Pasadena until after the keynote that kicked off the day.

The first talk that I attended was “Contribution is not only a code.” by Tatiana Krupenya, the CEO of DBeaver. She did a great job of breaking down the many ways that people can contribute to open source development aside from writing code, and I appreciated that her final point was that the simplest contribution anyone can make, and one that will be well-received, is just a heart-felt thank you to maintainers of tools that you find valuable.

She also brought up what I am sure is a great talk by Zak Greant from Eclipsecon 2019 titled “When Your Happy Dreams Are About Dying” about burnout in the open source developer community, which I’m looking forward to catching up on.

After that, it was off to Brian Proffitt’s “Measuring the Impact of Community Events” where he provided his perspective from his roles at the Red Hat OSPO, Apache Software Foundation, and other places. It was a great companion to the first session, but more from the perspective of why companies and projects may want to think about measuring how they engage with the community.

I took another spin through the expo during what was supposed to be the lunch break, and picked up my conference T-shirt and a free bucket hat from AWS.

After lunch, Tyler Menezes from CodeDay spoke about “Nurturing the Next Generation of Open Source Contributors” and how the non-profit he founded works to connect high school and college students from underprivileged backgrounds with resources to help them thrive in tech. One of the programs pairs small teams of students with a mentor to help them make a contribution to an open source project, and it sounds amazing. I plan to find a way to get involved once I have my employment situation sorted out.

The next talk was Heather Osborn on “Organic isn't always good for you,” which was sort of a case study of her experience as a DevOps leader tackling the complicated environment that had taken root at the startup she was working at, and how they figured out a strategy to straighten that out. It was really interesting to hear the language she used about convincing the company management to buy into the plan, which seemed more adversarial and dismissive than the working environments that I’ve been in.

“Solving ‘secret zero’, why you should care about SPIFFE!” by Mattias Gees was by far the most technical talk that I attended today. Like the presentation on Presto yesterday, it seemed a bit like the sort of system that is very impressive and I will probably never need.

The last talk I attended was Michael Gat on “Anti-Patterns in Tech Cost Management” which was pretty true to the title. It was a little light on the open source aspect, but there were definitely insights there on the importance of laying the groundwork early for being able to do cost analytics on systems you’ll be scaling. There were three or so questions from people that started with “I’m an engineer, and ...” which I thought was great. I think what bothered me about Heather Osborn’s talk was how it implied a certain distaste for connecting the engineering to the business realities, and I think it is very important for engineers to understand, and have respect for, business decision-making.

One more day to go. I am surprised how heavy the program is on cloud computing and DevOps, but I guess that’s a huge chunk of what people are working on these days. What I have been missing from the talks so far is programming-focused talks.

Thoughts from SCALE 21x, day 2

The second day of the Southern California Linux Expo meant the start of the expo, and more talks.

I started the day with “Best Practices for Running Databases on Kubernetes” with Peter Zaitsev, who was a coworker at MySQL and went on to found Percona. While I am getting a better sense of what Kubernetes is all about and already had some idea of how databases might exist in that world, his talk was a great overview and the “best practices” seemed to cover a lot of bases.

That was followed by “Kubernetes and Distributed SQL Databases: Same Consistency With Better Availability and Scalability” which showed off using Kine as a way to plug in different systems as the data store for Kubernetes instead of etcd. I wish the speaker had spent a little more time giving some practical examples of why this is something you would even want to do. It was a good reminder that k3s exists and I should play around with it. And the speaker just using an outline in an open text editor (Pico!) as his slides reminded me of when I gave a talk on MySQL and PHP using plain-text slides. (Looks like my talk has been disappeared, though.)

After that, it was back over to the other side of the expo for a talk on “Leveraging PrestoDB for data success” which was an overview of the Presto project, which provides an ANSI SQL query interface to a collection of other data sources (my paraphrase). Kiersten Stokes, the presenter who works at IBM, called MySQL a “traditional database” which struck me as funny. Presto is a very slick and powerful system that I will probably never need. I appreciate that everyone I have seen talk about the concept of a “data lakehouse” is appropriately embarrassed about the name.

Before the next round of talks started, the expo floor finally opened, so I took a quick spin through that. It was pretty busy, and seemed like a good crowd of projects and companies. I think the largest footprint was maybe a couple of 10' × 40' booths from companies like AWS and Meta, but otherwise it was a lot of 10' × 10' booths with a couple of people handing out stickers or other promotional items from behind a table (and talking about their projects/companies).

After that I went back to the MySQL track (four talks!) to see “Design and Modeling for MySQL” which was really more of a speed-run of database history and concepts. The presenter made the classic mistake of white text on a dark background so it was pretty tough to see what he was showing until someone dimmed the lights.

That was followed by “Beyond MySQL: Advancing into the New Era of Distributed SQL with TiDB” from Sunny Bains, whose time on the MySQL/InnoDB team overlapped my time working at MySQL, but I don’t think we ever met. TiDB seems like a very impressive cloud-native distributed database which doesn’t actually derive from MySQL, but instead has chosen to be protocol and query-language compatible.

The last session I attended was a panel from the Open Government track on “The OSPO POV.” OSPO stands for “Open Source Program Office” and can act as kind of the interface between companies or organizations and the open source world. There were a bunch of projects and communities mentioned that I want to look into further: TODO Group, Fintech Open Source Foundation, CHAOSS (Community Health Analytics in Open Source Software), Sustain, The Open Source Way, Inner Source Commons, and OSPO++.

Things got busier today, which was nice to see. I wasn’t in a great headspace most of the day, which pretty much sucked, but I think I came away with a lot of things to dig into on my own, which is one of the reasons I wanted to attend.

How I use Docker and Deployer together

I thought I’d write about this because I’m using Deployer in a way that doesn’t really seem to be supported.

After the work I’ve been doing with Python lately, I can see that the way I have been using Docker with PHP is sort of comparable to how venv is used there.

On my production host, my docker-compose setup all lives in a directory called tmky. There are four containers: caddy, talapoin (PHP-FPM), db (the database server), and search (the search engine, currently Meilisearch).

There is no installation of PHP aside from that talapoin container. There is no MySQL client software on the server outside of the db container.

I guess the usual way of deploying in this situation would be to rebuild the PHP-FPM container, but what I do is just treat that container as a runtime environment and the PHP code that it runs is mounted from a directory on the server outside the container.

It’s in ${HOME}/tmky/deploy/talapoin (which I’ll call ${DEPLOY_PATH} from now on). ${DEPLOY_PATH}/current is a symlink to something like ${DEPLOY_PATH}/release/5.

The important bits from the docker-compose.yml look like:

    services:
      talapoin:
        image: jimwins/talapoin
        volumes:
          - ./deploy/talapoin:${DEPLOY_PATH}

This means that within the container, the files still live within a path that looks like ${HOME}/tmky/deploy/talapoin. (It’s running under a different UID/GID so it can’t even write into any directories there.) The caddy container has the same volume setup, so the relevant Caddyfile config looks like:

    trainedmonkey.com {
        log

        # compress stuff
        encode zstd gzip

        # our root is a couple of levels down
        root * {$DEPLOY_PATH}/current/site

        # pass everything else to php
        php_fastcgi talapoin:9000 {
            resolve_root_symlink
        }

        file_server
    }

(I like how compact this is, Caddy has a very it-just-works spirit to it that I dig.)

So when a request hits Caddy, it sees a URL like /2024/03/09, figures out there is no static file for it and throws it over to the talapoin container to handle, giving it a SCRIPT_FILENAME of ${DEPLOY_PATH} and a REQUEST_URI of /2024/03/09.

When I do a new deployment, ${DEPLOY_PATH}/current will get relinked to the new release directory, the resolve_root_symlink from the Caddyfile will pick up the change, and new requests will seamlessly roll right over to the new deployment. (Requests already being processed will complete unmolested, which I guess is kind of my rationale for avoiding deployment via updated Docker container.)
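As a rough illustration of why the relink is seamless, here is a minimal Python sketch of an atomic symlink swap. It is not Deployer's actual implementation; the function name `relink_current` and the temporary link name `current.tmp` are made up for this example, and the `release/<n>` layout follows the paths described above.

```python
import os


def relink_current(deploy_path, release):
    """Point <deploy_path>/current at release/<release> without a gap.

    Hypothetical helper for illustration only. The new symlink is created
    under a temporary name and then renamed over the old one, so there is
    never a moment when "current" does not exist.
    """
    target = os.path.join("release", release)  # relative link target
    current = os.path.join(deploy_path, "current")
    tmp = os.path.join(deploy_path, "current.tmp")

    # Clear out a leftover temporary link from an interrupted run.
    if os.path.lexists(tmp):
        os.remove(tmp)

    os.symlink(target, tmp)
    # os.replace() renames the link itself; on POSIX the rename is atomic.
    os.replace(tmp, current)
```

A request that already resolved the old target keeps running against the old release directory, while anything that resolves `current` after the rename sees the new one.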

Here is what my deploy.php file looks like:

    <?php
    namespace Deployer;

    require 'recipe/composer.php';
    require 'contrib/phinx.php';

    // Project name
    set('application', 'talapoin');

    // Project repository
    set('repository', 'https://github.com/jimwins/talapoin.git');

    // Host(s)
    import('hosts.yml');

    // Copy previous vendor directory
    set('copy_dirs', [ 'vendor' ]);
    before('deploy:vendors', 'deploy:copy_dirs');

    // Tasks
    after('deploy:cleanup', 'phinx:migrate');

    // If deploy fails automatically unlock.
    after('deploy:failed', 'deploy:unlock');

Pretty normal for a PHP application; the only real additions here are using Phinx for the data migrations and using deploy:copy_dirs to copy the vendor directory from the previous release so we are less likely to have to download stuff.

That hosts.yml is where it gets tricky, because when we are running PHP tools like composer and phinx, we have to run them inside the talapoin container.

    hosts:
      hanuman:
        bin/php: docker-compose -f "${HOME}/tmky/docker-compose.yml" exec --user="${UID}" -T --workdir="${PWD}" talapoin
        bin/composer: docker-compose -f "${HOME}/tmky/docker-compose.yml" exec --user="${UID}" -T --workdir="${PWD}" talapoin composer
        bin/phinx: docker-compose -f "${HOME}/tmky/docker-compose.yml" exec --user="${UID}" -T --workdir="${PWD}" talapoin ./vendor/bin/phinx
        deploy_path: ${HOME}/tmky/deploy/{{application}}
        phinx:
          configuration: ./phinx.yml

Now when it’s not being pushed to an OCI host that likes to fall flat on its face, I can just run dep deploy and out goes the code.

I’m also actually running Deployer in a Docker container on my development machine, too, thanks to my fork of docker-deployer. Here’s my dep script:

    #!/bin/sh
    exec \
      docker run --rm -it \
        --volume $(pwd):/project \
        --volume ${SSH_AUTH_SOCK}:/ssh_agent \
        --user $(id -u):$(id -g) \
        --volume /etc/passwd:/etc/passwd:ro \
        --volume /etc/group:/etc/group:ro \
        --volume ${HOME}:${HOME} \
        -e SSH_AUTH_SOCK=/ssh_agent \
        jimwins/docker-deployer "$@"

Anyway, I’m sure there are different and maybe better ways I could be doing this. I wanted to write this down because I had to fight with some of these tools a lot to figure out how to make them work how I envisioned, and just going through the process of writing this has led me to refine it a little more. It’s one of those classic cases of putting in a lot of hours to end up with relatively few lines of code.

I’m also just deploying to a single host; deployment to a real cluster of machines would require more thought and tinkering.

Release early, release often

One of the benefits of starting Frozen Soup from a project template is that someone very smart (Simon) has done all the heavy lifting to make publishing it into the Python ecosystem really easy to do. So after I added a new feature today (pulling in external url(...) references in CSS inline as data: URLs), I went ahead and registered the project on PyPI, tagged the release on GitHub, and let the GitHub Actions that were part of the project template do the work of publishing the release. It worked on the first try, which is lovely.

I pushed more changes after I did that release, adding a way to set timeouts and fixing the first issue (that I also filed) about pre-existing data: URLs getting mangled. I also added a quick-and-dirty server version which allows for getting the single-file HTML version of a page, and makes it a little easier to play around with the single-file version of live URLs without having to deal with saving and opening the files.
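For a sense of what the CSS handling involves, here is a minimal sketch of rewriting url(...) references while leaving pre-existing data: URLs alone. It is not the actual Frozen Soup code: the regular expression is a simplification, and `fetch` is a hypothetical callable (URL in, bytes and MIME type out) standing in for whatever does the downloading.

```python
import base64
import re

# Simplified pattern: url(...) with optional matching quotes around the reference.
CSS_URL = re.compile(r"""url\(\s*(['"]?)(.*?)\1\s*\)""")


def inline_css_urls(css, fetch):
    """Replace url(...) references in a CSS string with data: URLs.

    `fetch` is an assumed callable: fetch(url) -> (data_bytes, mime_type).
    """
    def replace(match):
        ref = match.group(2)
        # Already inlined: return the original text untouched so it
        # does not get fetched or re-encoded.
        if ref.startswith("data:"):
            return match.group(0)
        data, mime = fetch(ref)
        encoded = base64.b64encode(data).decode("ascii")
        return f'url("data:{mime};base64,{encoded}")'

    return CSS_URL.sub(replace, css)
```

Passing the fetcher in as an argument keeps the rewriting logic easy to test without touching the network.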

So I did a second release.

Introducing Frozen Soup

I made a new thing, which I decided to call Frozen Soup. It creates a single-file version of an HTML page by in-lining all of the images using data: URLs, and pulling in any CSS and JavaScript files.
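The core trick is small enough to show. This is a minimal sketch of building a data: URL from a file's bytes using only the standard library; the helper name `to_data_url` is hypothetical and this is not lifted from the Frozen Soup source.

```python
import base64
import mimetypes


def to_data_url(filename, data):
    """Build a data: URL for `data`, guessing the MIME type from `filename`.

    Hypothetical helper for illustration. Falls back to a generic binary
    type when the extension is not recognized.
    """
    mime, _encoding = mimetypes.guess_type(filename)
    if mime is None:
        mime = "application/octet-stream"
    encoded = base64.b64encode(data).decode("ascii")
    return f"data:{mime};base64,{encoded}"
```

An `<img src="photo.png">` would then have its `src` replaced with the result of `to_data_url("photo.png", image_bytes)`. Base64 makes the payload roughly a third larger, which is the price of getting everything into one file.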

It is loosely inspired by SingleFile which is a browser extension that does a similar thing. There are also tools built on top of that which let you automate it, but then you’re spinning up a headless browser, and it all felt very heavyweight. The venerable wget will also pull down a page and its prerequisites and rewrite the URLs to be relative, but I don’t think it has a comparable single-file output.

This may also exist in other incarnations, but this is mostly an excuse for me to practice with Python. As such, it is a very crude first draft right now, but I hope to keep tinkering with it for at least a little while longer.

I have also been contributing some changes and test cases to ArchiveBox, but this is different yet also a little related.

Grinding the ArchiveBox

I have been playing around with setting up ArchiveBox so I could use it to archive pages that I bookmark.

I am a long-time, but infrequent, user of Pinboard and have been trying to get in the habit of bookmarking more things. And although my current paid subscription doesn’t run out until 2027, I’m not paying for the archiving feature. So as I thought about how to integrate my bookmarks into this site, I started looking at how I might add that functionality. Pinboard uses wget, which seems simple enough to mimic, and I also found other tools like SingleFile.

That’s when I ran across mention of ArchiveBox and decided that would be a way to have the archiving feature I want and don’t really need/want to expose to the public. So I spun it up on my in-home server, downloaded my bookmarks from Pinboard, and that’s when the coding began.

ArchiveBox was having trouble parsing the RSS feed from Pinboard, and as I started to dig into the code I found that instead of using an actual RSS parser, it was either parsing it using regexes (the generic_rss parser) or an XML parser (the pinboard_rss parser). Both of those seemed insane to me for a Python application to be doing when feedparser has practically been the gold standard of RSS/Atom parsers for 20 years.

After sleeping on it, I rolled up my sleeves, banged on some Python code, and produced a pull request that switches to using feedparser. (The big thing I didn’t tackle is adding test cases because I haven’t yet wrapped my head around how to run those for the project when running it within Docker.)

Later, I realized that the RSS feed I was pulling of my bookmarks would be good for pulling on a schedule to keep archiving new bookmarks, but I actually needed to export my full list of bookmarks in JSON format and use that to get everything in the system from the start.

But that importer is broken, too. And again it’s because instead of just using the json parser in the intended way, there was a hack to work around what appears to have been a poor design decision (ArchiveBox would prepend the filename to the file it read the JSON data from when storing it for later reading) that then got another hack piled on top of it when that decision was changed. The generic_json parser used to just always skip the first line of the file, but when that stopped being necessary, that line-skipping wasn’t just removed, it was replaced with some code that suddenly expected the JSON file to look a certain way.

Now I’ve been reading more Python code and writing a little bit, and starting to get more comfortable with some of the idioms. I didn’t make a full pull request for it, but my comment on the issue shows a different strategy of trying to parse the file as-is, and if that fails, skip the first line and try it again. That should handle any JSON files with garbage in the first line, such as what ArchiveBox used to store them as. And maybe there is some system out there that exports bookmarks in a format it calls JSON that actually has garbage on the first line. (I hope not.)
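The parse-then-retry strategy can be sketched in a few lines. This is an illustration of the idea rather than the code from the issue comment, and the function name `load_json_lenient` is made up:

```python
import json


def load_json_lenient(text):
    """Parse JSON, tolerating one line of garbage at the top.

    First try the text as-is. If that fails, drop everything up to and
    including the first newline and try once more. A second failure is
    allowed to raise json.JSONDecodeError to the caller.
    """
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        _first_line, _newline, rest = text.partition("\n")
        return json.loads(rest)
```

Well-formed files take the fast path and never have their first line touched, which is the point: the workaround only kicks in when the straightforward parse has already failed.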

So with that workaround applied locally, my Pinboard bookmarks still don’t load because ArchiveBox uses the timestamp of the bookmark as a unique primary key and I have at least a couple of bookmarks that happen to have the same timestamp. I am glad to see that fixing that is on the project roadmap, but I feel like every time I dig deeper into trying to use ArchiveBox it has me wondering why I didn’t start from scratch and put together what I wanted from more discrete components.

I still like the idea of using ArchiveBox, and it is a good excuse to work on a Python-based project, but sometimes I find myself wondering if I should pay more attention to my sense of code smell and just back away slowly.

(My current idea to work around the timestamp collision problem is to add some fake milliseconds to the timestamp as they are all added. That should avoid collisions from a single import. Or I could just edit my Pinboard export and cheat the times to duck the problem.)
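The fake-milliseconds idea might look something like this sketch. It assumes timestamps arrive as whole epoch seconds and that a string key is acceptable; both are assumptions for the example, not a description of ArchiveBox's schema, and `disambiguate` is a hypothetical name.

```python
def disambiguate(timestamps):
    """Append a fake millisecond suffix so repeated timestamps become unique.

    Assumes whole-second timestamps. The n-th repeat of a value gets the
    suffix .00n, so this only holds up to 1000 entries sharing one second.
    """
    seen = {}
    result = []
    for ts in timestamps:
        count = seen.get(ts, 0)
        seen[ts] = count + 1
        result.append(f"{ts}.{count:03d}")
    return result
```

As noted above, this only avoids collisions within a single import, since the counter starts over on every run.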

Oracle Cloud Agent considered harmful?

Playing around with my OCI instances some more, I looked more closely at what was going on when I was able to trigger the load to go out of control, which seemed to be anything that did a fair amount of disk I/O. What quickly stuck out thanks to htop is that there were a lot of Oracle Cloud Agent processes that were blocking on I/O.

So in the time-honored tradition of troubleshooting by shooting suspected trouble, I removed Oracle Cloud Agent.

After doing that, I can now do the things that seemed to bring these instances to their knees without them falling over, so I may have found the culprit.

I also enabled PHP’s OPcache and some rough-and-dirty testing with good ol’ ab says I took the homepage from 6 r/s to about 20 r/s just by doing that. I am sure there’s more tuning that I could be doing. (Requesting a static file gets about 200 r/s.)

By the way, the documentation for how to remove Oracle Cloud Agent on Ubuntu systems is out of date. It is now a Snap package, so it has to be removed with sudo snap remove oracle-cloud-agent. And then I also removed snapd because I’m not using it and I’m petty like that.

Fall down, go boom

I am either really good at making Oracle Cloud Infrastructure instances fall over, or the VM.Standard.E2.1.Micro shape is even more under-powered than I expected. I had been using the Ubuntu “minimal” image as my base, so I thought I would try the Oracle Linux 8 image and I couldn’t even get it to run yum check-update without that process getting killed. That seems like a less-than-ideal experience out of the box.

What seems to happen on the instances (with Ubuntu) that I am using to host this site is that if something does too much I/O, the load average spikes, and things slowly grind through before recovering. The problem is that something like “running composer” seems to be too much I/O, which makes it awkward to deploy code.

Another thing that seems to get out of control quickly is when I reindex the site with Meilisearch. Considering there is very little data being indexed, that obviously shouldn’t be causing any sort of trouble. I have two instances spun up now, so I can play with the settings on one without temporarily choking off the live site. It’s probably just a matter of setting the maximum indexing memory in Meilisearch’s configuration or constraining the memory on that container.

I also added an OCI Flexible Network Load Balancer in front of my instance so I can quickly switch things over to another without waiting on any DNS propagation. Maybe if Ampere instances ever become available in my region I will play around with splitting the deployment across multiple instances.

Coming to you from OCI

After some fights with Deployer and Docker, this should be coming to you from a server in Oracle Cloud Infrastructure. There are still no Ampere instances available, so it is what they call a VM.Standard.E2.1.Micro. It seems to be underpowered relative to the Linode Nanode that it was running on before, or maybe I just have set things up poorly.

But having gone through this, I have the setup for the “production” version of my blog streamlined so it should be easy to pop up somewhere else as I continue to tinker.

Docker, Tailscale, and Caddy, oh my

I do my web development on a server under my desk, and the way I had it set up is with a wildcard entry set up for *.muck.rawm.us so requests would hit nginx on that server which was configured to handle various incarnations of whatever I was working on. The IP address was originally just a private-network one, and eventually I migrated that to a Tailscale tailnet address. Still published to public DNS, but not a big deal since those weren’t routable.

A reason I liked this is because I find it easier to deal with hostnames like talapoin.muck.rawm.us and scat.muck.rawm.us rather than running things on different ports and trying to keep those straight.

One annoyance was that I had to maintain an active SSL certificate for the wildcard. Not a big deal, and I had that nearly automated, but a bigger hassle was that whenever I wanted to set up another service it required mucking about in the nginx configuration.

Something I have wanted to play around with for a while was using Tailscale with Docker to make each container (or docker-compose setup, really) its own host on my tailnet.

So I finally buckled down, watched this video deep dive into using Tailscale with Docker, and got it all working.

I even took on the additional complication of throwing Caddy into the mix. That ended up being really straightforward once I finally wrapped my head around how to set up the file paths so Caddy could serve up the static files and pass the PHP off to the php-fpm container. Almost too easy, which is probably why it took me so long.

Now I can just start this up, it’s accessible at talapoin.{tailnet}.ts.net, and I can keep on tinkering.

While it works the way I have it set up for development, it will need tweaking for “production” use since I won’t need Tailscale.

My history with programming languages

I think I have always had an interest in playing around with different programming languages. My first probably would have been Applesoft BASIC. In elementary school, I remember writing a bouncing-lines graphic toy more than once, and D&D character generators.

I am really not sure what came next, but it was probably mostly things like DOS batch files (and eventually 4DOS batch files).

I wrote some little utilities for DESQview in x86 assembly and even released them as freeware, but I haven’t been able to track them down again.

The first substantial project I did was probably the billing and reporting system for my dad’s company that was written in FoxPro before it was even acquired by Microsoft. This would have been where I first encountered SQL, too.

All of this would have been essentially self-taught. I vaguely remember participating in some computer classes or clubs, but nothing terribly structured. I’m sure that I was exposed to Pascal, probably Turbo Pascal, at some point in those.

One memory from my freshman year at college is that I used the (* multi-line syntax *) of Pascal comments on an assignment instead of the { single-line syntax } and the grader for the assignment had some sort of reaction about that.

I was exposed to a lot of programming languages in college, especially because I had to take the “Programming Languages” class twice after failing it the first time. (I had a rough time in my sophomore year.) It was taught by different professors who used different languages to teach the concepts. Some languages I remember doing at least an assignment or two in: C, Fortran, ML, Scheme, Lisp, Perl, COBOL, Ada, and APL. Part of Harvey Mudd College’s program is a capstone project in your senior year where you work on a project for an outside company or organization. The project I was part of wrote a tool for doing internationalization for voicemail prompts for Octel Communications using Visual Basic.

My first job after college was for an educational/games software company named Knowledge Adventure (KA) on a project that was released as “Steven Spielberg’s Director’s Chair.” At the time, all of the projects at the company were done using an in-house programming language named “Acomplish” (or maybe “Accomplish”) which was short for “A Computer English.” I have been trying to dig up some reference to or examples of it and have come up empty so far, but it was a natural-language-ish syntax that had originally been intended for producers to write in. By the time I worked there it was mostly being used by programmers who graduated from Mudd, Caltech, and UCLA, which is kind of funny.

It was while working on KA’s website that I got involved in the early days of PHP. I guess here is where someone could make jokes about how obviously someone who had failed “Programming Languages” had a hand in PHP, but I don’t recall that I had very much, if any, involvement in the actual design of the language. (Although I remember having to write way too many emails to make the case that casting a string to an integer should not interpret it the same way as numeric literals because people would have brought out pitchforks when leading zeroes caused them to be interpreted as octal values.)

At KA, the second title I worked on (what was released as “Dr. Brain’s Thinking Games: IQ Adventure”) was coded in C/C++ after we had done some initial prototyping in Acomplish. The game had a multiplayer component and we ended up borrowing the graphics and networking library from our (at the time) sister company, Blizzard Entertainment. (I later went on to have the worst programming interview experience of my life, so far, there.)

When I left KA they were in the process of adopting a new engine for all of their projects that was Java-based, or maybe just evaluating it. In any case, I never ended up working with that engine but I did a small contract project around this time that was a Java applet. (It was for the website for a show on NBC.)

My next job was at HomePage.com, which was an idealab startup that was basically a second-generation GeoCities. (A number of the folks in the management team were actually ex-GeoCities people who cashed out when that was acquired by Yahoo.) We built our system in Perl (with mod_perl), and built a sort of primitive HTML templating system called Gear. The original parser was regex-based until one of the other senior engineers wrote a proper lexer and parser for it.

I’m not sure when I first started working in JavaScript, but it was probably somewhere in this period.

After HomePage.com, I ended up working for MySQL Ab leading the web team, which meant back to writing a bunch of PHP code. And I am sure I had encountered Python before this, but recently I dug up something I wrote during this time in Python that connected to our bugs database, announced new bugs, and could be queried in a few ways. During the rest of my time at MySQL, I did more C/C++ programming, probably more Perl, and even did a tiny bit of work with Ruby.

Other programming languages I have played with at some time or another: Logo, MATLAB, OCaml, Tcl, Dylan, Oberon, Modula, and Delphi (Object Pascal).

Most recently I have been playing around with Rust and Go, and done some reading on Swift.

This whole train of thought was actually triggered by seeing Delphi mentioned in a job description and it reminded me of how intrigued I was when I first encountered it. I have a soft spot for Pascal and the successors.

Poking around with Rust

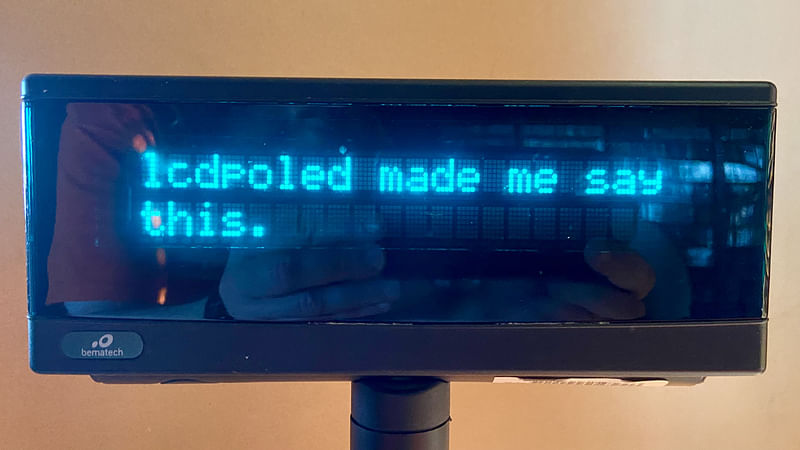

A long time ago, I implemented a quick-and-dirty daemon in C that used the vendor’s support library to display messages on an LCD pole display at the store. The state of California requires that electronic point-of-sale systems have a customer-facing display, and this fulfilled that.

It was very simple, it just listened for TCP connections and displayed the text it was sent. Every 15 seconds it would reset the display to a hardcoded default message. When an item was added to an invoice in Scat POS, it would push the name and price to the daemon. It also pushed the total when payment was initiated.

Like I said, it was quick and dirty, and we used it for a decade and I never really got around to doing any of the basic improvements that I wanted to do, like being smarter about when to go back to the default display.
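One way to be smarter about the default display is to reset only after the display has been quiet for a while, instead of on a fixed 15-second timer. Here is a minimal sketch of that state logic in Python; it leaves out the TCP listener and the hardware entirely, and the class and parameter names are hypothetical.

```python
import time


class PoleDisplay:
    """Tracks what the display should show (illustrative sketch only)."""

    def __init__(self, default_message, idle_seconds=15.0, clock=time.monotonic):
        self.default_message = default_message
        self.idle_seconds = idle_seconds
        self._clock = clock  # injectable so the logic can be tested
        self.text = default_message
        self._last_update = None

    def show(self, text):
        """Display a message and restart the idle countdown."""
        self.text = text
        self._last_update = self._clock()

    def tick(self):
        """Call periodically; revert to the default after enough quiet time."""
        if self._last_update is not None:
            if self._clock() - self._last_update >= self.idle_seconds:
                self.text = self.default_message
                self._last_update = None
        return self.text
```

With this approach a message pushed one second before a timer boundary still gets its full 15 seconds on screen.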

The LCD display was one of the things we didn’t manage to sell off when we closed down the store, so I took it home and now it’s on my desk, hooked up to the Raspberry Pi 4 that used to be our print server. I decided to use it as an excuse to start learning Rust.

I pulled out one of the examples from the code for a crate that wraps libusb to provide access to USB devices, hit it with a hammer until I got it to push text to the display, and now I have the basis to re-implement what I had before and then give it the polish that I never did. Maybe implement a more user-friendly way of sending the various control codes from the user manual for doing things like clearing the screen.

That’s the theory, at least. The reality is that first I had to migrate all of my photos from Flickr to my own service, implement a way to add new photos to the collection, and then upload the photo I took of the display showing a simple message so I could blog about it.

And I am not sure if doing more with this is actually the next thing I’ll tackle, but writing about what I had done so far is at least something to check off the to-do list that I don’t have.

The code lives in the lcdpoled repository on GitHub. (The old C code is now off on a different branch.)

More syndication

Now that Bluesky is available without a waitlist and has a web interface, I have been playing around with it a little more. So in that spirit, posts here will get posted there just like I’ve been doing for Mastodon.

I’m just using this PHP interface for Bluesky because it was what I ran across first, but I probably should use Ben Ramsey’s socialweb/atproto which looks like a more rigorous implementation of the underlying AT protocol.

Anyway, it’s hacked together for now, and the very few followers I have over on Bluesky will now perhaps find these things in their feed.

You can even find links to the posts on Mastodon and Bluesky in the details of the entries here. One of my plans is to eventually pull in replies on either of those as comments here based on jwz’s hack for doing that with WordPress.

Sometimes the hammer is too big

Elasticsearch is something I have seen pop up in a lot of job listings, so I decided to play around with it to see if I could use it for the search on this site. I was able to get it set up fairly easily on my development server and shift over to using it there, but when I tried to bring it up on my production server, I ran into the problem that it is more resource-hungry than that server can handle. This all runs on a Nanode, which is Linode’s tiniest virtual server that just has 1GB of RAM.

Right now I am using Sphinx which has been fine, but it hasn’t really been open source for quite a while.

I was going to try playing around with ZincSearch next. I was digging into it and it certainly sounds similar to what I want (a “lightweight alternative to Elasticsearch that requires minimal resources”) but it isn’t clear how active the project is or what sort of future it has. The documentation for ZincSearch is pretty but kind of scant. Looking into the search types it supports, I was left with questions about what syntax the querystring type actually supported. So I looked at the code (which is slow reading since I am still fairly unfamiliar with Go), which I followed to the underlying bluge indexing library, which has even less documentation. I finally figured out that bluge was forked from bleve (which seems like something that would be nice to mention in the README for bluge, but whatever). Bleve has the query string format documented. (Whew.)

But after all of that digging, I’m less certain about how much time I want to put into playing with ZincSearch since the underpinnings and their future seem shaky.

Typesense or Meilisearch is on my list to give a try next.

But in case it isn’t obvious, the things I have been digging into are sort of scattered right now.

Now the photos live here

I’d call it maybe an alpha release, but a very basic version of my photo library is now up and running. There is one last picture I need to migrate over from my old Flickr presence, but otherwise they should all have made it over. I should look at pulling in the photos from my Instagram account. The real test of things would be to load my iCloud photo library, but that is about 25,000 pictures and I’d certainly have to go through to see what could be public. It would probably be better to figure out how I want to get photos into the library going forward, and then I can back-fill photos from before this year.

Just implementing this has made me think a lot about how my system here is structured, how the data is structured, and how I want to structure it. I am trying to move towards adopting more of the IndieWeb principles and standards.

Enabling GD’s JPEG support in Docker for PHP 8.3

I am generating a ThumbHash for each photo in my new photo library using this PHP library, and it needs to use either the GD Graphics Library extension or ImageMagick to decode the image data to feed it into the hash algorithm.

The PHP library recommends the ImageMagick extension (Imagick) because GD still doesn’t support 8-bit alpha values, but I ran into the bug that prevents Imagick from building that is fixed by this patch that hasn't been pulled into a released version yet. Then I realized that none (or close to none) of the images I’d be dealing with use any sort of transparency, so GD would be fine. And it was already enabled in my Dockerfile, so I should have been good to go.

But it turns out that although I thought I had included GD, I hadn’t actually properly enabled JPEG support in GD, so the ThumbHash library’s helper function to extract the image data it needed just failed on a call to ImageSX() after ImageCreateFromString had failed. (Here is a pull request to SRWieZ/thumbhash to throw an exception on that failure, which would have saved me a few steps of debugging.)

Looking at the code for the GD extension, that should not have been a silent failure, so some digging may be required to figure out what happened with that. I may have just missed that particular error message in the logs.

Enabling JPEG support is fairly simple, although a lot of the instructions I found online were a little out of date. The important thing was that I needed to add this to my Dockerfile between installing the development packages and building the PHP extensions: docker-php-ext-configure gd --with-freetype --with-jpeg.

So now I can successfully generate a ThumbHash for all of my photos, except for another bug I haven’t tracked down yet where it sometimes produces a hash that is longer than expected. The ThumbHash for this photo is 2/cFDYJdhgl3l2eEVMZ3RoOkD1na which can be turned directly into this image:

Some notes on Flickr data migration

I decided to stop renewing my subscription with Flickr recently to create some incentive for me to self-host my photos and integrate them more closely here. Before my subscription lapsed, I requested an archive of all of my Flickr data, and now I am finally getting around to working with the data.

When you download your Flickr data it includes JSON files named like photo_50626142.json and JPEG files named like young-mimes_50626142_o.jpg and the name of the JPEG is not in the JSON data.

You can generate it, probably, using the name and ID but I’m not sure what the rules are for turning the name field into the hyphenated (kebab-case) form.

Except that images without a name have JPEG files named like 17483805680_f57f81feb5_o.jpg. The id is at the beginning, the other bit is just random or something. (Looks like this is the same filename used for the original URL in the JSON.)

The way to go seems to be just matching on the ID embedded in the filename. (That’s what the one other tool I’ve seen that uses the export data does.)

And when working through all of this, I found that I must have not downloaded one of the archive files from Flickr, because I was missing 83 JPEG files. I was able to use the JSON files to rescue them.
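A minimal sketch of that ID matching in Python, assuming the export has JSON files named photo_<id>.json and JPEGs whose underscore-separated filename contains the same ID somewhere. The function name match_export and the photo_123.json entry in the usage note are hypothetical, just for illustration:

```python
import re
from pathlib import PurePath

# JSON metadata files in the export are named like photo_50626142.json.
JSON_NAME = re.compile(r"^photo_(\d+)\.json$")


def match_export(json_names, jpeg_names):
    """Pair each photo_<id>.json with the JPEG whose filename contains <id>.

    Returns (matched, unmatched, missing):
      matched   - dict mapping JSON filename to JPEG filename
      unmatched - JPEGs whose name contains no known ID
      missing   - JSON files with no JPEG (candidates to re-download)
    """
    ids = {}
    for name in json_names:
        m = JSON_NAME.match(name)
        if m:
            ids[m.group(1)] = name

    matched = {}
    unmatched = []
    for jpeg in jpeg_names:
        # "young-mimes_50626142_o" -> ["young-mimes", "50626142", "o"]
        tokens = PurePath(jpeg).stem.split("_")
        photo_id = next((t for t in tokens if t in ids), None)
        if photo_id is None:
            unmatched.append(jpeg)
        else:
            matched[ids[photo_id]] = jpeg

    missing = sorted(set(ids.values()) - set(matched))
    return matched, unmatched, missing
```

Checking tokens against the set of known IDs, rather than assuming the ID sits in a fixed position, handles both the named files (ID in the middle) and the unnamed ones (ID at the start). Calling it with an extra photo_123.json that has no JPEG would report that file in the missing list, which is the kind of gap the JSON files can then be used to fill.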

Now that I know that I actually have all of the data and all of the images are in a Backblaze B2 bucket fronted by Gumlet, the next step will be loading all of the relevant metadata into a database table and then wiring up some ways to browse the images here.

Where to put routing code

I won’t make another Two Hard Things joke, but an annoying thing in programming is organizing code. Something that bothered me over the years as I was developing Scat POS is that adding a feature that exposed new URL endpoints required making changes in what felt like scattered locations.

In the way that Slim Framework applications seem to be typically laid out, you have your route configuration in one place and then the controllers (or equivalent) live off with your other classes. Slim Skeleton puts the routes in app/routes.php and your controller will live somewhere down in src. Scat POS started without using a framework, and then with Slim Framework 3, so the layout isn’t quite the same but it’s pretty close. The routes are mostly in one of two applications, app/pos.php or app/web.php, and then the controllers are in lib.

So as an example, when I added a way to send a text message to a specific customer, I had to add a couple of routes in app/pos.php as well as the actual handlers in lib.

(This was an improvement over my pre-framework days where setting up new routes could involve monkeying with Apache mod_rewrite configuration.)

Finally, for one of the controllers, I decided to move the route configuration into a static method on the controller class and just call that from within a group. Here is a commit adding a report that way, which didn’t have to touch app/pos.php.
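The shape of that idea can be sketched in Python with a toy router. This is not Slim's API or the actual Scat POS code; Router, ReportController, register_routes, and the /report/sales path are all hypothetical names, used only to show the controller owning its own routes:

```python
class Router:
    """Toy stand-in for a framework router (hypothetical, not Slim's API)."""

    def __init__(self, prefix=""):
        self.prefix = prefix
        self.routes = {}

    def get(self, path, handler):
        # Register a GET handler under this router's prefix.
        self.routes[("GET", self.prefix + path)] = handler

    def group(self, prefix, register):
        # Hand a prefixed sub-router to the callback, then merge its routes.
        sub = Router(self.prefix + prefix)
        register(sub)
        self.routes.update(sub.routes)


class ReportController:
    @staticmethod
    def register_routes(group):
        # The controller declares its own endpoints next to its handlers.
        group.get("/sales", ReportController.sales)

    @staticmethod
    def sales():
        return "sales report"


# The central route file only needs one line per controller.
app = Router()
app.group("/report", ReportController.register_routes)
```

With this layout, adding another report endpoint means touching only the controller class; the central file keeps a single line per controller.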

Just at a quick glance, it looks like Laravel projects are set up more like the typical Slim Framework project with routes off in one place that call out to controllers. Symfony can use PHP attributes for configuring routes in the controller classes, which seems more like where I would want to go with this thinking.

I am not sure what initially inspired me to start using Slim Framework but if it seems like I am doing things the hard way sometimes, that is sort of intentional. On a project like this where I was the only developer, it was a chance to explore ideas and learn new concepts in pretty small chunks without having to buy in to a large framework. If I were to start the project fresh now, I might just use Symfony and find other new things to learn (like HTMX). If I had needed to hand off development of Scat POS to someone else, I would have needed to spend some time making things more consistent so there weren’t multiple places to look for routes, for example.

And as a side note, going back to that commit adding SMS sending to customers, you can see a bit of the interface I had for popping up dialogs. It used Bootstrap modal components because it pre-dates browser support for <dialog>. The web side of Scat POS actually evolved that to use browser-native dialogs (as a progressive enhancement) because I had rebuilt it all more recently and that side no longer used Bootstrap.

The joy of open source

As I mentioned, one of the reasons I was trying to get organized in setting up my development environment under chezmoi was because I wanted to start using Atuin, which does what it claims in making the shell magical. It stores and syncs the shell history (in an SQLite database behind-the-scenes), and while I have only just started using it, it seems pretty great. Before starting with it, I just had the bash history set up on my primary development machine to never expire, so there were six years of history there (now imported into Atuin).

But Atuin is fairly new, and I ran into some rough edges. One is that because I’m still using the bundled bash on macOS, and it is a very old version of bash, not everything works correctly (accessing the history by up-arrow just errors out). This has been fixed in the Atuin repo, so it should no longer be a problem after they roll out their next release.

As I finished integrating Atuin into my chezmoi setup, I noticed that it seemed to no longer be updating the history on my Linux box. When I went into debugging it, I finally found that I was loading two different versions of bash-preexec. One was being loaded by my chezmoi setup (where it is set up to download to ~/.bash-preexec.sh in my .chezmoiexternal.toml), and the other was being loaded by /etc/profile.d/wezterm. My version was 0.5.0, the version that WezTerm was loading was 0.4.1.

Between those two versions, bash-preexec decided to change the variable that they use to prevent double-inclusion, but the implementation was sort of one-way: the new version would set both the old and new variables, but only check the new one. So if you loaded the old one first, the new one would still load and (apparently) not work correctly.

I let Wez know that he was bundling an old version, which he promptly updated (and so it’s fixed in the nightly builds now). I also submitted a patch to bash-preexec to pay attention to the old guard variable, so whenever they do a new release that particular problem won’t bite anyone else. (They will face a new one, where having an old version of bash-preexec loaded may prevent the newer version from loading, but that should be relatively straightforward to figure out.)
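The guard-variable interaction is easier to follow as a small simulation. This is a Python model of the behavior described above, not bash-preexec's actual code, and the guard names old_guard and new_guard are placeholders rather than the real variable names:

```python
OLD_GUARD = "old_guard"  # placeholder for the guard used by the old version
NEW_GUARD = "new_guard"  # placeholder for the guard used by the new version


def load_old(env, loaded):
    # The old version only knows about the old guard.
    if env.get(OLD_GUARD):
        return
    env[OLD_GUARD] = True
    loaded.append("old")


def load_new(env, loaded, check_old_guard=False):
    # As released, the new version checks only the new guard.
    if env.get(NEW_GUARD):
        return
    # With the patch, it also backs off if the old guard is already set.
    if check_old_guard and env.get(OLD_GUARD):
        return
    # It sets both guards, so an old copy loaded later will skip itself.
    env[NEW_GUARD] = True
    env[OLD_GUARD] = True
    loaded.append("new")


def simulate(order, check_old_guard=False):
    """Source the given versions in order and report which ones ran."""
    env, loaded = {}, []
    for version in order:
        if version == "old":
            load_old(env, loaded)
        else:
            load_new(env, loaded, check_old_guard)
    return loaded
```

In this model, simulate(["old", "new"]) runs both copies (the double-load case), simulate(["new", "old"]) runs only the new one, and simulate(["old", "new"], check_old_guard=True) runs only the old one, which is the trade-off mentioned in the parenthetical above.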

This journey brought to mind an experience I had at HomePage.com about 25 years ago. We had a fleet of front-end web servers that all accessed user storage on an NFS server (an F5 box) and we were running into trouble where the Linux machines would periodically get stuck or die. It was an all-hands-on-deck situation trying to figure it out, and I eventually hit enough things with hammers to figure out that it was a bug in Linux NFS and there was a fix in a later kernel version that I could just back port and suddenly our Linux machines were stable. I pointed out the fix that could be back ported to Alan Cox, then maintainer of the stable Linux kernel. It’s possible they were pulling my leg about it, but the marketing/PR people at the company talked about putting out a press release about how I had fixed a bug in NFS on Linux. I found the whole idea embarrassing and felt this was all just part of the normal open source process.

(Small side note: The company ended up migrating the front-end servers to FreeBSD, which had been a parallel investigation to me debugging the NFS problem.)

This is the sort of mess I enjoy digging my way out of, and it is generally more fun to do this in the open source world than in some company’s proprietary codebase.

Another tool I plan to explore is ble.sh which is recommended by Atuin. The entire idea of line-editing and syntax highlighting built entirely in shell code sounds ludicrous.

Setting up house with chezmoi

It has been a long time since I was the sort of developer who fine-tuned his setup to be just so.

The .vimrc on my development machine is less than 50 lines long. And a big chunk of that is a function that I don’t remember adding and I’m pretty sure I never used.

I made the small change to using WezTerm instead of the stock Mac OS X terminal but not for any particularly exciting reason. My configuration is very barebones, just changing the font, color scheme, and setting up a very simple window title.

I figured it was time to get more organized, so one of the first things I’ve done is set up chezmoi to manage my configuration files. I want to start playing around with at least a slightly more sophisticated and consistent setup, and add things like Atuin into the mix.

Titles are where I can be abstruse

When I added auto-posting of entries here to my Mastodon account, it just took the title of the entry and posted that along with the link. But those end up being kind of cryptic, so now I made it possible to actually write the Mastodon post alongside the blog entry.
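A rough sketch of that fallback in Python, assuming an entry has a title, a link, and an optional hand-written post. The function name compose_status and its parameters are hypothetical, and the site's real code is not shown here:

```python
def compose_status(title, url, custom_text=None):
    """Build the text of the post for an entry.

    Uses the hand-written text when there is one, otherwise falls back
    to the entry title, and always appends the link.
    """
    text = (custom_text or "").strip() or title
    return f"{text}\n\n{url}"
```

The result is just the string to post; sending it to Mastodon is a separate step.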

Just a small step in building out this online presence more fully and making sure it’s connected to other places more thoughtfully.

The next thing I want to do is build a new home for my photos. I stopped renewing my Flickr Pro subscription. I’ve thought about setting up a Pixelfed instance but that seems like overkill. I may use it as an excuse to build something in a language other than PHP because my resume could use that. Nearly all of my non-PHP work has been lost to me because it wasn’t open source.

thought i missed one: oscommerce

i ran across a reference to oscommerce in the slides of a tutorial i presented at o’reilly oscon in 2002(!) where i ran through a survey of major php applications, and i thought that meant i had missed one in my round-up of open-source php point-of-sale applications.

but it’s an ecommerce platform, not a point-of-sale system and it doesn’t look like it has a module or add-on to provide a point-of-sale interface.

speaking of that, there are some point-of-sale add-ons for woocommerce, which is itself the ecommerce add-on to wordpress. it looks like the only open-source/free ones are built specifically for use with square or paypal terminals.

titi, a simple database toolkit